Most investors view memory chips as a "boom and bust" commodity: you buy when prices crash and sell when factories are full. But as Micron Technology (MU) approaches its Q2 2026 earnings, the old rules no longer apply cleanly. The stock has rallied significantly because Micron is no longer just selling standard computer parts; it is providing the essential "fuel" for the AI revolution. With its 2026 HBM capacity already 100% sold out, the real story isn't just revenue. It is a staggering jump in profit margins that look more like a software giant's than a hardware maker's.

Micron Earnings Preview Q2 2026: What is the Key Focus?

The focus is on High Bandwidth Memory (HBM) demand and margin expansion. Analysts expect a 457% YoY increase in EPS ($8.60–$8.77) driven by HBM capacity being 100% booked for 2026 and gross margins reaching a record 77%.
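As a quick sanity check on those numbers, a 457% increase means the new EPS is 5.57 times the year-ago figure. The sketch below backs out the prior-year EPS implied by the quoted range, assuming the 457% applies across the whole range; the function name is illustrative and not taken from any data source.

```python
def implied_base_eps(current_eps: float, yoy_increase_pct: float) -> float:
    """Back out the prior-year EPS implied by a YoY percentage increase."""
    # A 457% increase means current = base * (1 + 4.57)
    return current_eps / (1 + yoy_increase_pct / 100)


# EPS range and growth rate quoted in the article
low = implied_base_eps(8.60, 457)
high = implied_base_eps(8.77, 457)

print(f"Implied prior-year EPS: ${low:.2f} to ${high:.2f}")
```

Under that assumption, the implied year-ago EPS is roughly $1.54 to $1.57, which shows how low the comparison base is.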

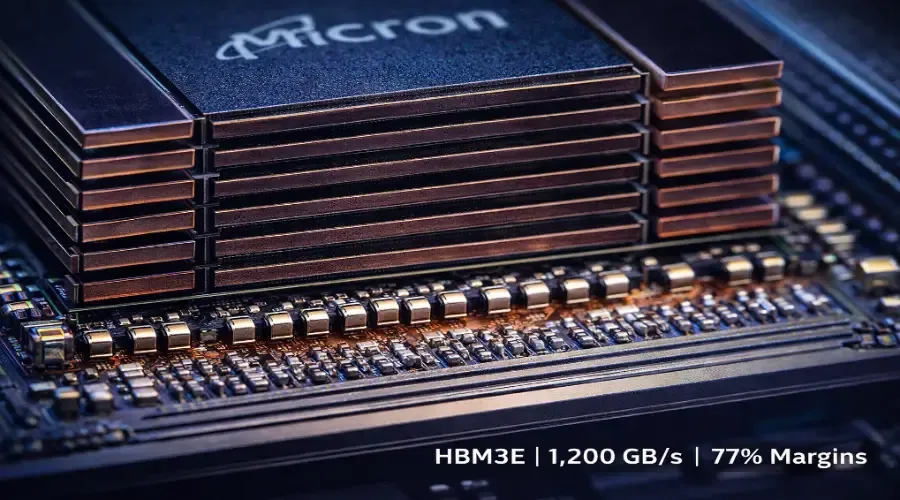

What is High Bandwidth Memory (HBM)?

To understand this Micron earnings preview, you must understand HBM. Think of standard memory (DRAM) like a single-lane road. It works for basic tasks, but it creates a massive traffic jam when you try to move a city's worth of data.

HBM is a vertically stacked memory architecture built for bandwidth-hungry workloads such as AI. Instead of spreading chips out, Micron stacks them to deliver bandwidth of 1,200–1,600 GB/s, compared to just 100 GB/s for standard memory.

Without HBM, the world’s most powerful AI chips would spend more time waiting for data than actually "thinking".
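To put the bandwidth gap in concrete terms, the sketch below compares how long it would take to move the same amount of data at the quoted speeds. The 400 GB payload is a hypothetical figure chosen only for illustration, and the calculation ignores latency and other real-world overheads.

```python
STANDARD_GB_PER_S = 100.0              # standard memory figure quoted above
HBM_GB_PER_S_RANGE = (1200.0, 1600.0)  # HBM range quoted above


def transfer_seconds(payload_gb: float, bandwidth_gb_per_s: float) -> float:
    """Idealized time to move a payload at a given bandwidth."""
    return payload_gb / bandwidth_gb_per_s


payload_gb = 400.0  # hypothetical amount of data, for illustration only

standard_time = transfer_seconds(payload_gb, STANDARD_GB_PER_S)
hbm_times = [transfer_seconds(payload_gb, bw) for bw in HBM_GB_PER_S_RANGE]
speedups = [bw / STANDARD_GB_PER_S for bw in HBM_GB_PER_S_RANGE]

print(f"Standard memory: {standard_time:.2f} s")
for bw, t, s in zip(HBM_GB_PER_S_RANGE, hbm_times, speedups):
    print(f"HBM at {bw:.0f} GB/s: {t:.2f} s ({s:.0f}x faster)")
```

On these figures, HBM moves the same data 12 to 16 times faster, which is the gap between a chip that computes and a chip that waits.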

Why HBM is Driving Micron Stock Higher

Sold Out Capacity

Micron's entire 2026 HBM production capacity was booked before the year even began.

Nvidia Alignment

Micron is a confirmed supplier for Nvidia's next-generation Vera Rubin platform, which is built entirely around HBM4.

Margin Explosion

While standard DRAM carries 40–55% margins, HBM is projected to push Micron’s gross margins to 77% in Q2 and 83% in Q3.

Sharp Insight: HBM is no longer just a product; it is a bottleneck. In an AI gold rush, the company controlling the bottleneck controls pricing.

How HBM Works in the AI Supply Chain

Nvidia Designs the GPU

High-end AI accelerators require massive bandwidth.

Micron Supplies the Memory Stack

Micron provides the HBM4 stacks that sit directly next to the GPU.

Hyperscalers Deploy AI Infrastructure

Microsoft, Google, and Meta invest billions in AI infrastructure, driving demand back to Micron.

What This Means for Investors

The Opportunity

If Micron sustains 80%+ margins, its earnings power could far exceed historical levels. Some analysts have raised price targets as high as $571.

The Risk

Markets are already pricing this in. Options pricing implies a 10–12% move after earnings, meaning any weak guidance could trigger a sharp decline.
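For readers wondering where an "implied move" comes from, a common rule of thumb divides the price of an at-the-money straddle (one call plus one put at the same strike) by the share price. The sketch below applies that approximation. All prices in it are hypothetical placeholders, not actual MU quotes, and traders often refine the estimate in ways not shown here.

```python
def implied_move_pct(call_price: float, put_price: float, stock_price: float) -> float:
    """Rule-of-thumb implied move: ATM straddle cost as a percent of the share price."""
    straddle_cost = call_price + put_price
    return straddle_cost / stock_price * 100


# Hypothetical prices for illustration only (not real market data)
stock_price = 400.0
atm_call = 22.0
atm_put = 22.0

move = implied_move_pct(atm_call, atm_put, stock_price)
print(f"Implied move: +/-{move:.1f}%")
print(f"Implied range: ${stock_price * (1 - move / 100):.2f} to ${stock_price * (1 + move / 100):.2f}")
```

The implied move is symmetric: it says how far the market expects the stock to travel, not in which direction.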

Common Mistakes Investors Should Avoid

Focusing Only on Q2 Results

Beginners focus on earnings beats. Professionals focus on Q3 guidance.

Ignoring Capex Risk

Micron is spending a record $8 billion on new factories. This is positive if demand remains strong, but risky if AI demand slows.

Micron Earnings: What to Watch Next

Q3 Revenue Guidance

Bulls want $25–$29 billion. Anything below $22 billion signals weakness.

HBM4 Production Update

Watch for confirmation that production aligns with Nvidia’s Vera Rubin rollout.

China Exposure Risk

Any new trade restrictions could negatively impact future earnings.

Sharp Insight: In a sold-out market, the winner isn’t the best seller—it’s the best executor.

The Bottom Line

Micron is in a "sweet spot" where demand is locked in through 2026. The key question is whether it can sustain record-breaking margins.

Watch Q3 guidance closely—that is the real trigger for the next move in the stock.

FAQs

Is Micron a buy before earnings?

This is not financial advice. Options markets suggest a 10–12% move, indicating high volatility risk.

Why is HBM more expensive than regular memory?

HBM is complex, supply-constrained, and essential for AI workloads—allowing premium pricing.

Who are Micron’s competitors?

Micron's main competitors in the HBM market are SK Hynix and Samsung.

What is the Vera Rubin platform?

It is Nvidia’s next-generation AI architecture relying on HBM4 memory.

What happens if AI demand slows?

Micron’s aggressive $8B capex could pressure margins and future earnings.